How to Convert SDR to HDR: Three Methods & Step-by-Step Guide

Summary: Curious about turning SDR footage into stunning HDR? This guide explains the key differences between SDR and HDR, why upgrading SDR to HDR matters, and how to convert SDR to HDR easily. Discover three proven methods—including AI-powered SDR to HDR converters—with step-by-step instructions to boost color, contrast, and overall video quality—no expert skills required.

Before picking an SDR to HDR converter for a video upgrade, you should be clear about what HDR is, how it differs from SDR, and why you might need it. Once you know what you are aiming for and why, you can choose the SDR to HDR converter that best fits your requirements. This guide gives an overview of SDR to HDR conversion and walks through three prominent SDR to HDR converters.

What Are SDR and HDR?

Before diving into SDR or HDR, it's important to distinguish between SD (Standard Definition) and HD (High Definition).

SD vs HD

SD (Standard Definition): Refers to a lower resolution, typically associated with analog display quality.

HD (High Definition): Introduced during the shift to digital technology, HD offers higher resolution and greater pixel density compared to SD, providing a clearer and sharper visual experience.

What is SDR?

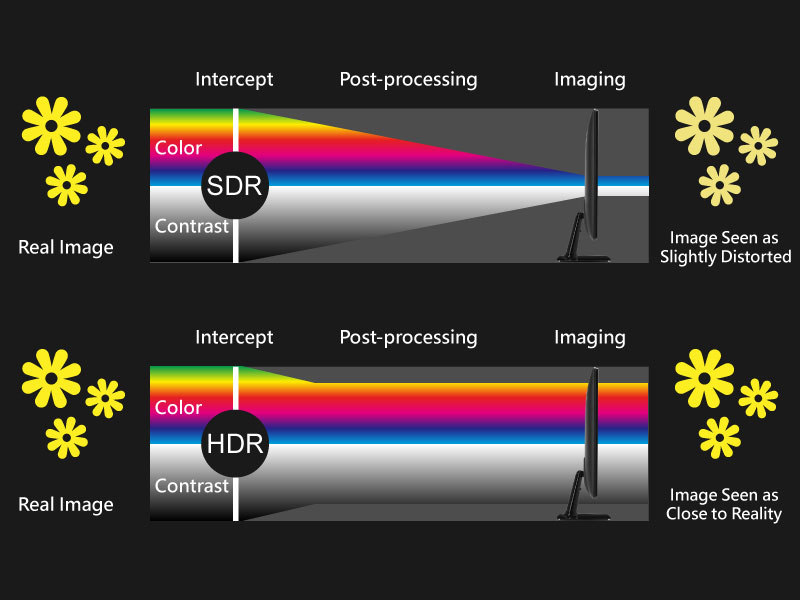

SDR (Standard Dynamic Range) defines video technology focused on light and color intensity within a limited range. It handles brightness, color, and contrast effectively but lacks the dynamic range and depth of newer technologies like HDR. SDR has been the standard for years in traditional displays.

What is HDR?

HDR (High Dynamic Range) takes visual quality to the next level, offering:

- Broader color range for more realistic hues.

- Enhanced brightness and contrast to highlight finer details in both dark and bright scenes.

- HDR pairs with HD and UHD displays, leveraging the advanced capabilities of modern screens to create more immersive visuals.

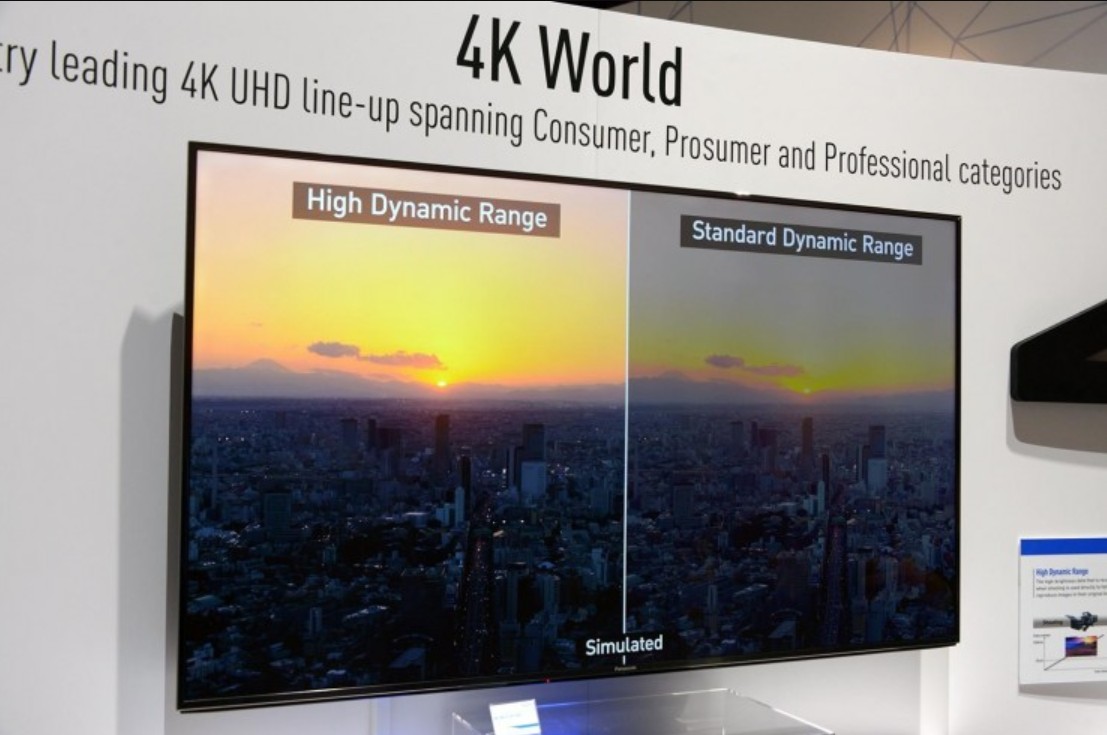

SDR vs HDR

- Brightness & Contrast: HDR significantly surpasses SDR in its ability to display a wider range of brightness levels and more detailed shadows.

- Color Range: HDR provides richer and more lifelike colors compared to SDR's limited palette.

- Visual Experience: HDR enhances realism and depth, making it ideal for modern content like films and games, while SDR remains a more basic standard for older content.

Understanding these differences helps in appreciating the technological advancements in video quality.

Why should you convert SDR to HDR?

SDR and HDR are two video technologies that govern color clarity, exposure, lighting effects, image detail, and the balance of light and shade. As the name suggests, HDR is the more advanced of the two. SDR is used in basic cameras and video displays, while HDR is used in advanced display devices such as smart TVs, smartphones, monitors, and other digital equipment. The parameters below will help you understand why you may need to convert SDR to HDR.

- Balancing Color & Contrast

SDR offers a limited color gamut and a narrower range of brightness and contrast, so bright and dark areas often look flat or underexposed. HDR provides better exposure, more vibrant color, and more detail in both bright and dark objects. The added contrast can also give footage a greater sense of depth.

- Brightness & Dynamic Range

On SDR displays, brightness typically ranges from 100 to 300 nits, and the dynamic range is about 6 stops. On HDR displays, scene brightness can range from 1 nit to 1000 nits, with a dynamic range of about 17.6 stops.

- Color Depth & Gamut

SDR video uses a color depth of 8 bits, or at most 10 bits, with the Rec.709 color gamut. HDR extends this to as much as 12 bits (HDR10 uses 10 bits) with the wider Rec.2020 color gamut.

- File Size Support

SDR carries less brightness and color information per frame. HDR video stores more of both, which usually means larger files, in exchange for a more realistic image. HDR also reveals more visual detail in the dark and bright areas of a video than SDR does.

- Device Compatibility

To keep pace with the digital media industry, most modern displays now support HDR video. However, even an HDR-capable display cannot deliver the HDR experience when it is fed SDR-quality video.
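To make the stop and bit-depth figures above more concrete, here is a minimal Python sketch. It relies only on two general facts: each stop doubles the amount of light, and an n-bit channel has 2^n code values. The function names are illustrative and do not come from any tool discussed in this guide.

```python
import math


def stops_to_contrast_ratio(stops: float) -> float:
    """Each stop doubles the light, so n stops span a (2**n):1 ratio."""
    return 2.0 ** stops


def luminance_range_in_stops(brightest_nits: float, darkest_nits: float) -> float:
    """Dynamic range, in stops, between two luminance levels."""
    return math.log2(brightest_nits / darkest_nits)


def levels_per_channel(bit_depth: int) -> int:
    """Number of distinct code values one color channel can hold."""
    return 2 ** bit_depth


if __name__ == "__main__":
    for label, stops in (("SDR", 6.0), ("HDR", 17.6)):
        ratio = stops_to_contrast_ratio(stops)
        print(f"{label}: {stops} stops is roughly {ratio:,.0f}:1")
    for bits in (8, 10, 12):
        print(f"{bits}-bit: {levels_per_channel(bits)} levels per channel")
```

As a rough cross-check, `luminance_range_in_stops(1000, 1)` is only about 10 stops. A figure near 17.6 stops appears if you assume a 1000-nit peak over a black level of about 0.005 nits; that black level is an assumption made here for illustration, not a number stated in this guide.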

3 Best SDR to HDR Converters to Upscale Video

Now that you know why you might convert SDR to HDR, you should pick the SDR to HDR converter that offers the flexibility and customization you need. Here are three methods for SDR to HDR conversion.

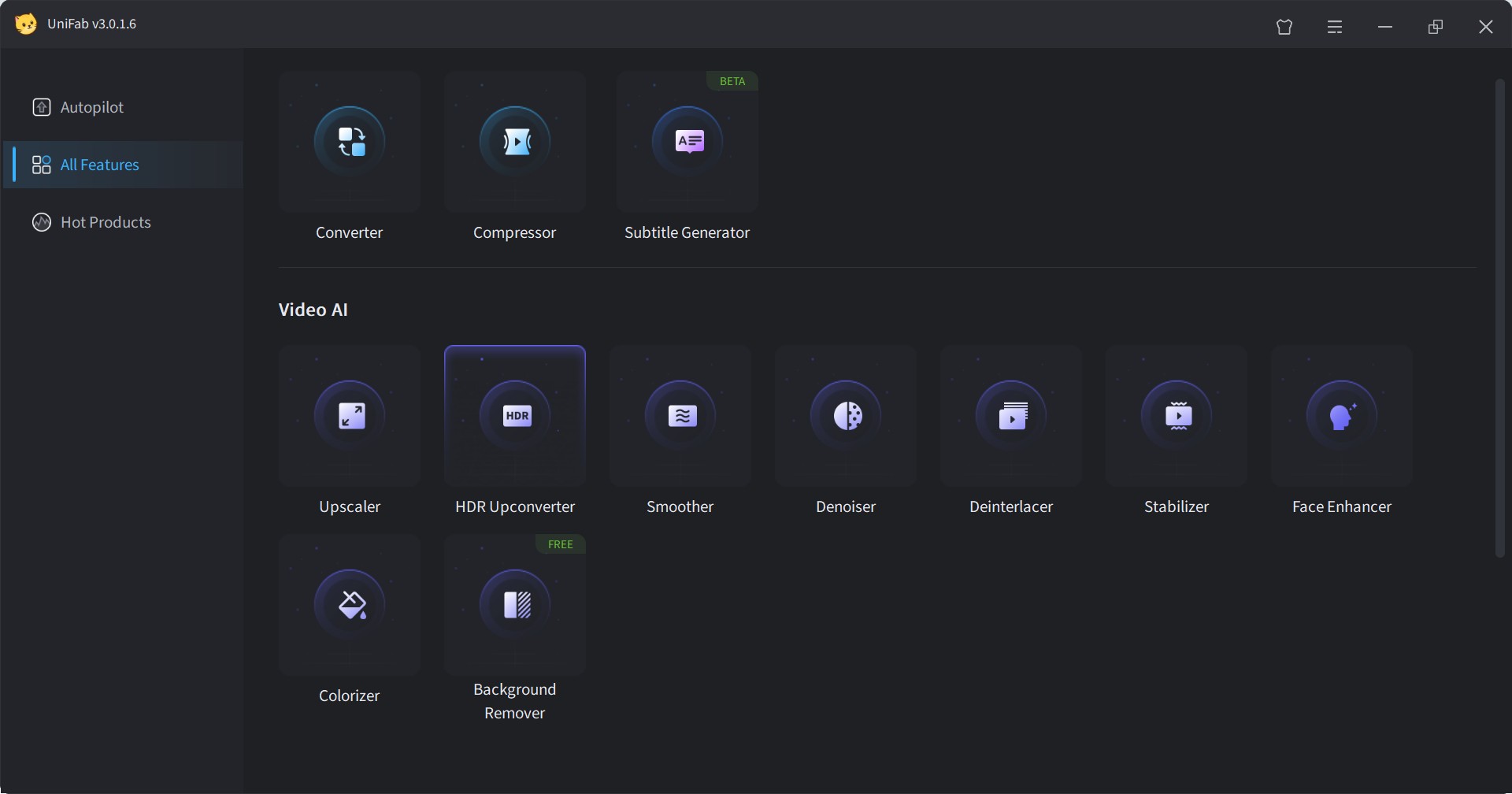

Method 1: Convert SDR to HDR Using UniFab HDR Upconverter AI

UniFab HDR Upconverter is an AI-powered tool built on a proprietary model trained with over 10,000 hours of cinematic footage across multiple generations. It supports HDR10 & Dolby Vision, accurately embedding HDR metadata for full display and MediaInfo compatibility. By intelligently enhancing color, brightness, and contrast, it delivers results visually close to HDR10 originals.

UniFab offers a user-friendly interface and a 30-day full-featured free trial with no watermark.

SDR to HDR with UniFab HDR Upconverter AI

Performance Highlights

- Perfect Balance of Light and Shadow — UniFab's SDR to HDR conversion delivers a wider dynamic range, capturing richer brightness layers to achieve an ideal balance between shadows and highlights, presenting visuals that closely mimic what the human eye perceives.

- Ultra-Fine Color Reproduction — The converted HDR videos cover a broader color gamut, faithfully restoring native HDR color distribution. Whether warm or cool tones, UniFab ensures vibrant and natural color rendering.

- Unlock Native-Level Details — UniFab's SDR to HDR retains up to 300% more detail, preserving pixel-level intricacies even in the darkest shadows and brightest highlights.

Key Features of UniFab HDR Upconverter AI:

- Supports SDR to HDR10/Dolby Vision Conversion

UniFab supports both HDR10 and Dolby Vision—two industry-standard HDR formats that enhance video with brighter highlights, richer colors, and better contrast.

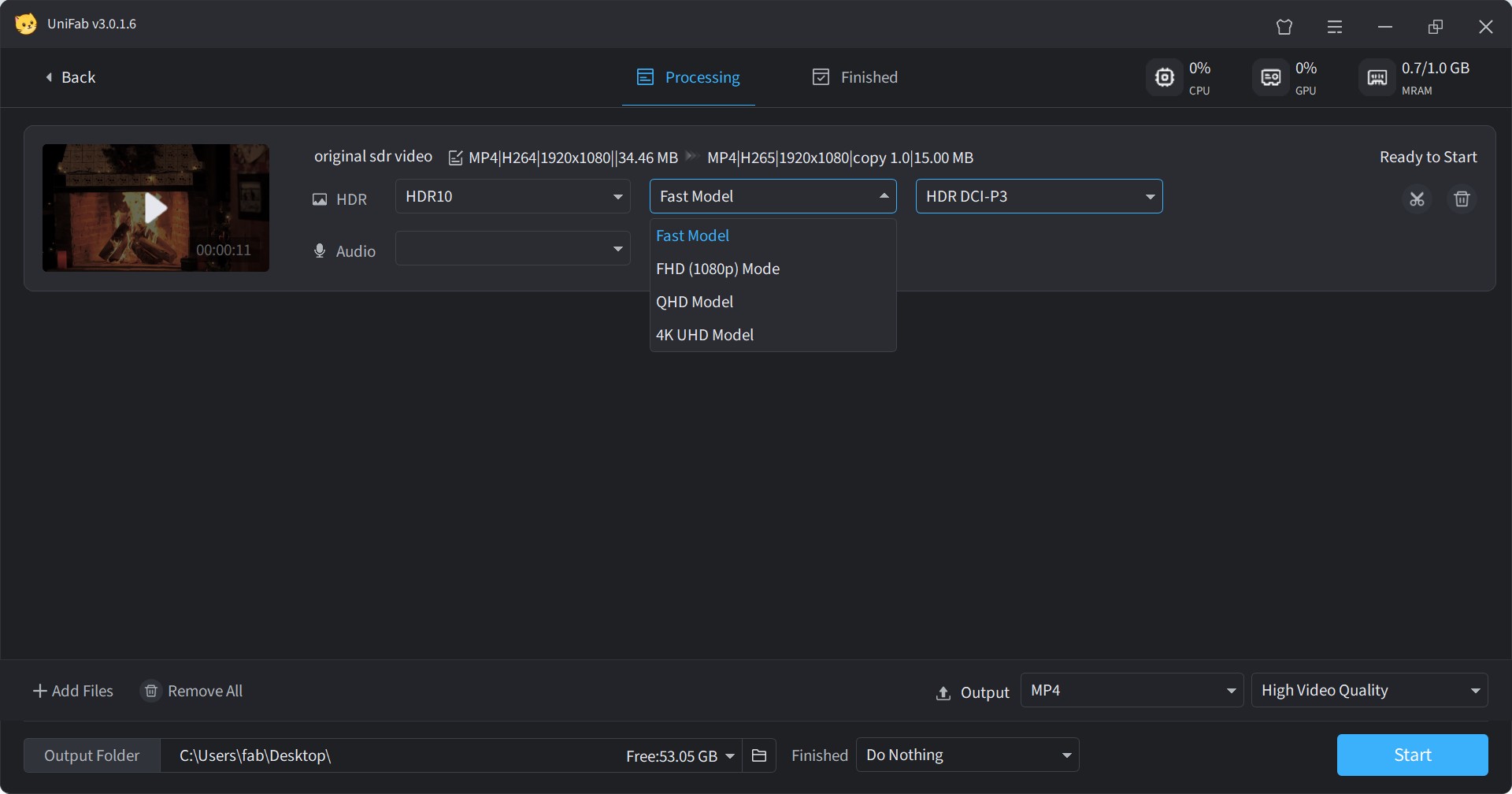

- Two Color Spaces Available: HDR DCI-P3 and HDR Rec.2020

Rec.2020 offers a wider color gamut than DCI-P3, but achieving its full potential requires high-end hardware. UniFab lets users choose the color space best suited to their display capabilities.

- Up to 50× Faster Conversion

Powered by advanced GPU acceleration, UniFab significantly reduces SDR-to-HDR processing time.

- Supports 4K UHD and QHD Models

The SDR to HDR conversion includes new model options for 4K UHD and QHD.

- Fast and High-Quality Modes

Choose between Fast Mode for quick conversion or High-Quality Mode for the best visual results.

- Built-in Editor & Custom Output Settings

Trim videos and customize output format, resolution, audio, color space, and more—all within the same interface.

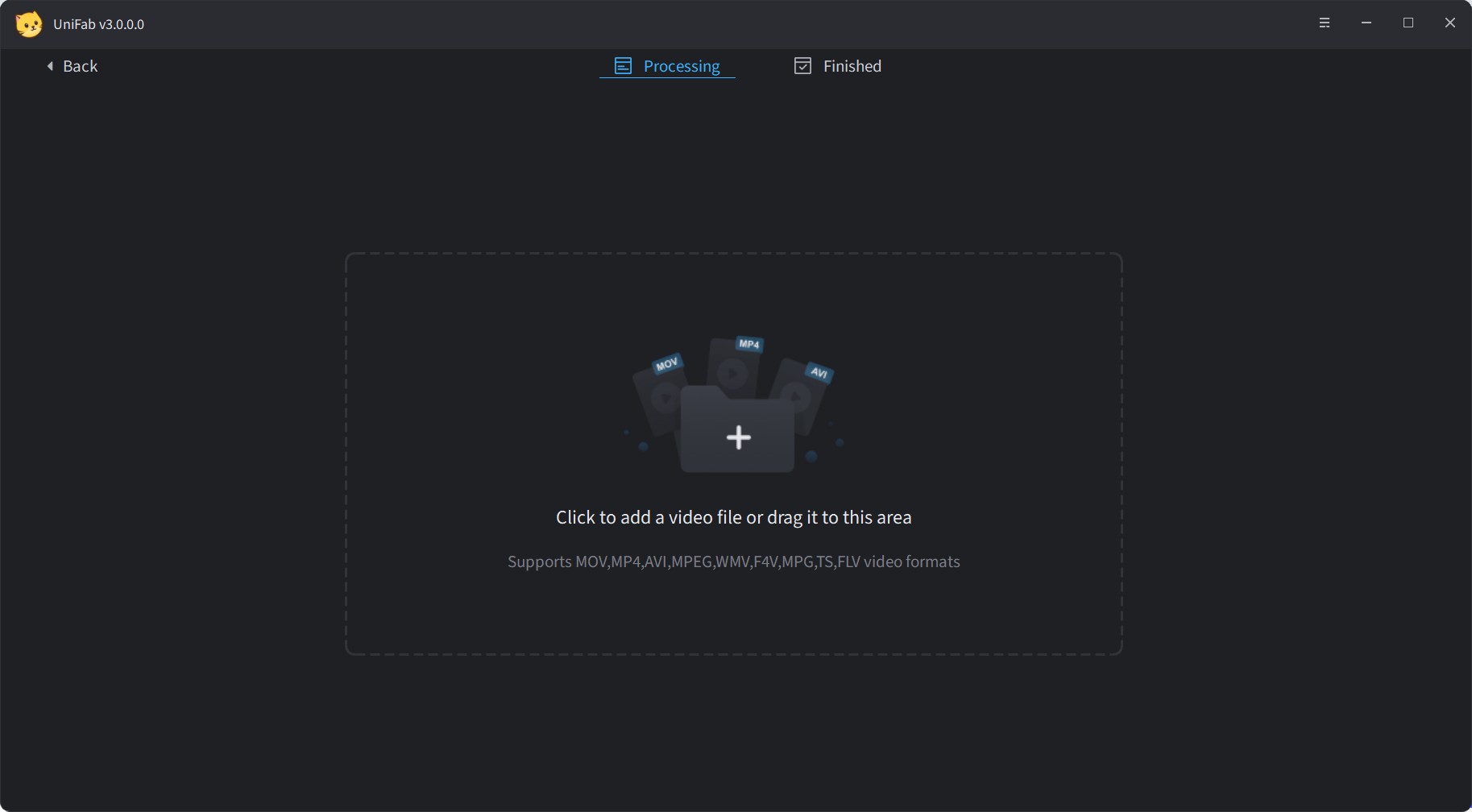
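For the color space choice among the features above, a rough way to see how much wider Rec.2020 is than DCI-P3 is to compare the triangles their primaries form on the CIE 1931 xy chromaticity diagram. The sketch below does that with the shoelace formula. The primaries are the published chromaticities for each standard; triangle area in xy is only a crude proxy, since the diagram is not perceptually uniform, and this is not how UniFab itself selects or evaluates a color space.

```python
# CIE 1931 xy chromaticities of the red, green, and blue primaries.
PRIMARIES = {
    "Rec.709": ((0.640, 0.330), (0.300, 0.600), (0.150, 0.060)),
    "DCI-P3": ((0.680, 0.320), (0.265, 0.690), (0.150, 0.060)),
    "Rec.2020": ((0.708, 0.292), (0.170, 0.797), (0.131, 0.046)),
}


def triangle_area(points):
    """Area of the triangle spanned by three (x, y) points (shoelace formula)."""
    (x1, y1), (x2, y2), (x3, y3) = points
    return abs(x1 * (y2 - y3) + x2 * (y3 - y1) + x3 * (y1 - y2)) / 2.0


def relative_gamut_areas(reference="Rec.709"):
    """Gamut triangle areas expressed as multiples of the reference gamut."""
    ref_area = triangle_area(PRIMARIES[reference])
    return {name: triangle_area(p) / ref_area for name, p in PRIMARIES.items()}


if __name__ == "__main__":
    for name, ratio in relative_gamut_areas().items():
        print(f"{name}: {ratio:.2f}x the Rec.709 triangle")
```

By this measure, the DCI-P3 triangle is roughly a third larger than Rec.709, and the Rec.2020 triangle is close to twice its size.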

How to Convert SDR to HDR with UniFab?

Launch UniFab on your system, click 'All Features', and select the “HDR Upconverter” mode from the 'Video AI' section.

Click the '+' button to upload your source video, or drag and drop the file directly.

Upscale your SDR video to HDR10 or Dolby Vision. Customize model, color space, format, quality, audio settings, and other preferences as needed. Once set, click “Start,” and the UniFab AI tool will handle the upscaling automatically.

Method 2: Convert SDR to HDR Using an Encoding Tool: EncodeGUI

If you are looking for a free SDR to HDR converter, you can try EncodeGUI, a free, open-source, AI-based video transcoder GUI. It gives you a streamlined video encoding and decoding experience with AI automation. Alongside its advanced encoding process, the tool provides multiple filters, including SDR to HDR conversion, resolution upscaling, frame interpolation, and denoising. Let's see what benefits this tool offers.

Benefits

- It supports around 10 formats, such as MKV, MP4, and FLV, for compatibility with most devices

- Explore the live-encoding process with a preview option

- You can retrieve the advanced metadata for the source video

- You can enjoy SDR to HDR conversion with batch processing

- Use this light-weight, easy-to-use tool without any prior video-encoding experience

Limitations

- Available only for Windows

- There is no video customization or editing option

- There are no color-correction or special effect-adding options

- The SDR to HDR conversion process is very lengthy & complicated

How to convert SDR to HDR with EncodeGUI?

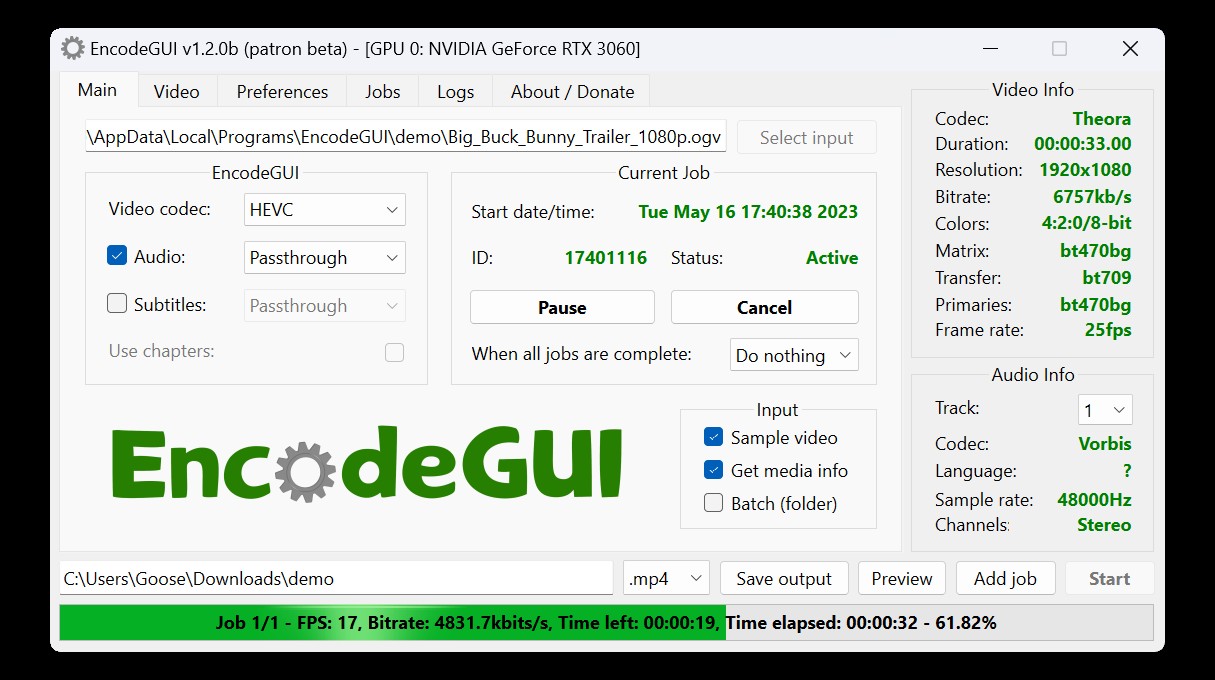

Step 1: Download and launch EncodeGUI v1.0.5 on your system, following the given instructions. Next, select the source video and open the encoding settings.

Step 2: Set the video codec to HEVC, the CRF value to 17, the profile to Main 10 with the level set to Unlimited, and the bit depth to 10-bit.

Step 3: Open the VapourSynth tab, go to Color, and activate the “Color Space Converter” option. Then set the input Matrix, Transfer, and Primaries according to the “Video Info” details in the right section.

Step 4: If any color space detail is missing from the video information, set that element to “BT470bg”. Then set the parameters in the “Output” section under the “Color Space Converter” option as shown, so that the file becomes HDR-compatible.

Step 5: Head back to the taskbar and select the “Signal” option > Enable Color > HDR10 Metadata. Next, set the Color Matrix, Transfer, and Primaries according to the settings shown in the picture.

Step 6: Finally, select the “Preview” option to verify your selection, and then select the “Start” option to begin the SDR to HDR conversion.

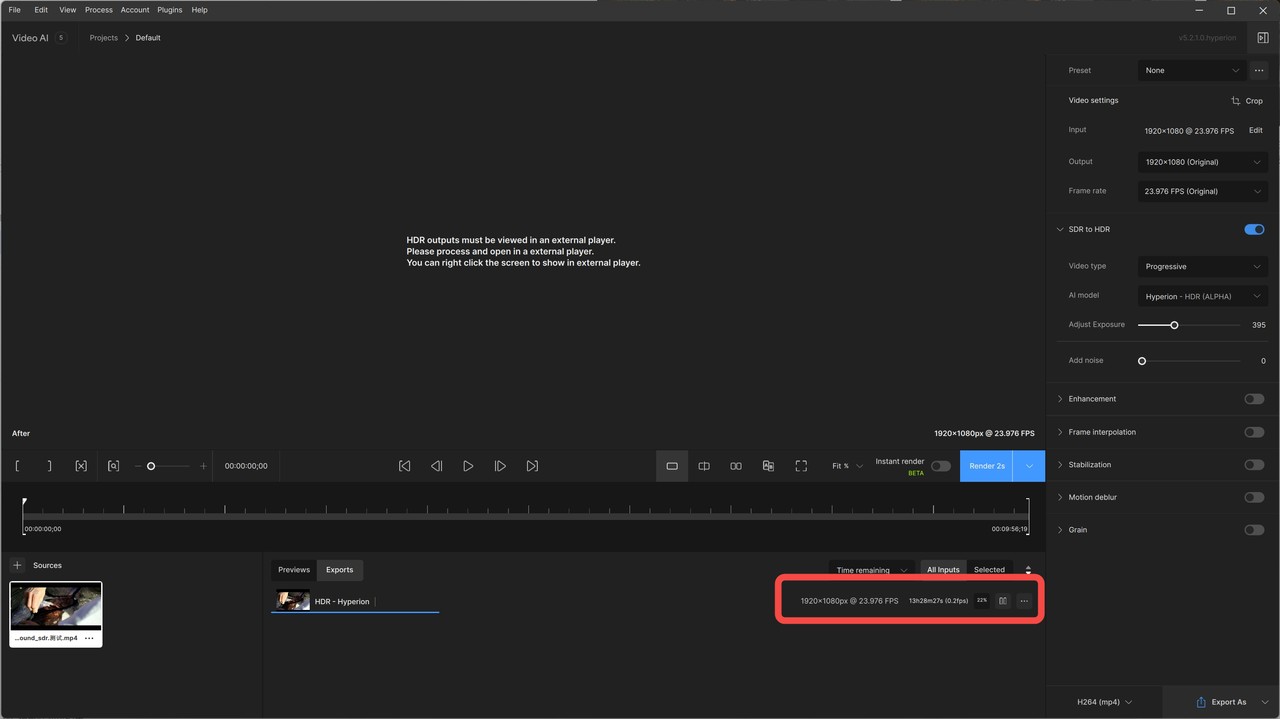
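For readers who prefer a scriptable route, the encoder settings from Step 2 and the HDR10 signaling from Step 5 can be approximated with ffmpeg and its libx265 encoder. The Python sketch below only builds the command line; it is not what EncodeGUI runs internally. The file names, the MaxCLL/MaxFALL values, and the mastering display (P3 primaries, D65 white point, 1000-nit peak) are placeholder assumptions you would replace with values that fit your footage. Note also that this command only encodes and tags the stream. The pixel conversion itself (Step 3) still has to be done by a color space filter before encoding.

```python
import shlex


def master_display_string(primaries_xy, white_xy, max_nits, min_nits):
    """Format mastering display metadata in the form x265 expects:
    chromaticities in units of 0.00002, luminance in units of 0.0001 cd/m2,
    listed in G, B, R, white point, luminance order."""
    def xy(point):
        return f"({round(point[0] * 50000)},{round(point[1] * 50000)})"

    red, green, blue = primaries_xy
    return (
        f"G{xy(green)}B{xy(blue)}R{xy(red)}WP{xy(white_xy)}"
        f"L({round(max_nits * 10000)},{round(min_nits * 10000)})"
    )


def build_hdr10_encode_command(src, dst, crf=17, max_cll=1000, max_fall=400):
    """Build an ffmpeg argument list for a 10-bit HEVC encode with HDR10 tags."""
    # Placeholder mastering display: P3 primaries, D65 white, 1000-nit peak.
    mastering = master_display_string(
        primaries_xy=((0.680, 0.320), (0.265, 0.690), (0.150, 0.060)),
        white_xy=(0.3127, 0.3290),
        max_nits=1000,
        min_nits=0.0001,
    )
    x265_params = ":".join([
        "repeat-headers=1",
        "colorprim=bt2020",
        "transfer=smpte2084",
        "colormatrix=bt2020nc",
        f"master-display={mastering}",
        f"max-cll={max_cll},{max_fall}",
    ])
    return [
        "ffmpeg", "-i", src,
        "-c:v", "libx265", "-crf", str(crf),
        "-pix_fmt", "yuv420p10le", "-profile:v", "main10",
        "-x265-params", x265_params,
        "-c:a", "copy", dst,
    ]


if __name__ == "__main__":
    # Hypothetical file names, shown only to print the resulting command.
    print(shlex.join(build_hdr10_encode_command("input_sdr.mp4", "output_hdr10.mkv")))
```

Building the command as a list keeps the parentheses and commas in the `master-display` value safe from shell quoting issues when it is later passed to `subprocess.run`.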

Method 3: Convert SDR to HDR Using Topaz Video AI: Hyperion Model

Topaz Video AI is a cutting-edge solution for enhancing video content.

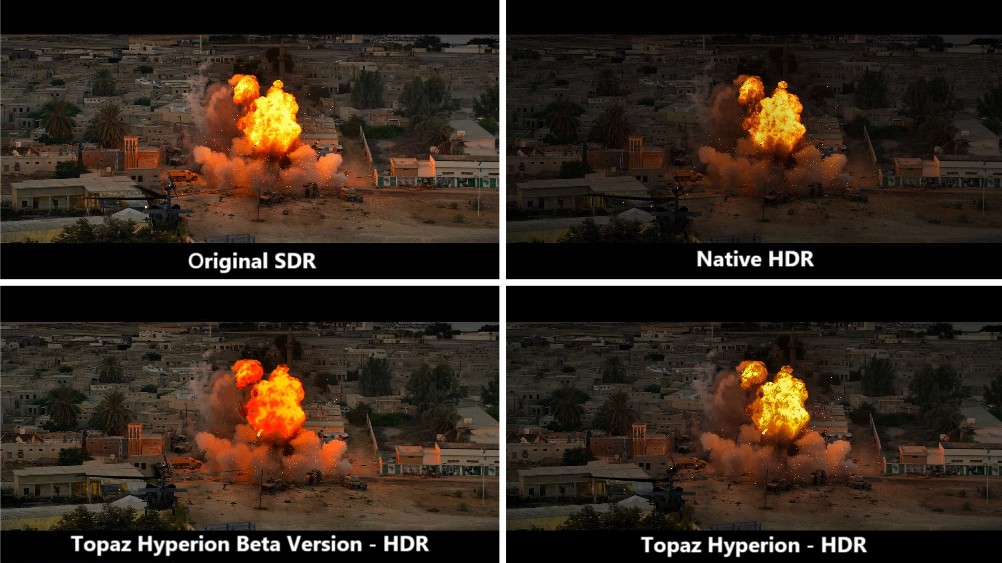

In July 2024, Topaz introduced the beta version of its Hyperion model for SDR-to-HDR conversion. During our initial testing of the beta version, we observed the following:

- Basic Brightness Adjustment: The model primarily made simple brightness changes, resulting in output that looked similar to the original SDR video.

- Excessive Visual Saturation: The output appeared overly saturated, especially with brightness and warm tones often overflowing, leading to an unnatural visual effect.

After several rounds of updates and improvements, the official version was released in November 2024. We retested the updated model, and here's what the final Hyperion version now offers:

- Optimized highlight handling to reduce artifacts and overexposure

- Support for HLG/PQ HDR standards to ensure broader HDR device compatibility

- "Boost Saturation" introduced for more accurate and natural color reproduction

- Adjustable highlight threshold to minimize edge artifacts and HDR color distortion

- Automatic color enhancement to prevent oversaturation or harsh transitions in HDR conversion
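Of the two HDR standards mentioned in the list above, PQ (SMPTE ST 2084) maps absolute luminance in nits to a signal value between 0 and 1. The Python sketch below implements the standard PQ curve in both directions using the constants from the specification. It illustrates the standard only and says nothing about how Hyperion itself processes video.

```python
# Constants from SMPTE ST 2084 (the PQ transfer function).
M1 = 2610 / 16384
M2 = 2523 / 4096 * 128
C1 = 3424 / 4096
C2 = 2413 / 4096 * 32
C3 = 2392 / 4096 * 32
PEAK_NITS = 10000.0  # PQ is defined up to 10,000 cd/m2


def pq_encode(nits: float) -> float:
    """Luminance in nits -> PQ signal value in [0, 1] (inverse EOTF)."""
    y = max(nits, 0.0) / PEAK_NITS
    y_m1 = y ** M1
    return ((C1 + C2 * y_m1) / (1.0 + C3 * y_m1)) ** M2


def pq_decode(signal: float) -> float:
    """PQ signal value in [0, 1] -> luminance in nits (EOTF)."""
    e = max(signal, 0.0) ** (1.0 / M2)
    return PEAK_NITS * (max(e - C1, 0.0) / (C2 - C3 * e)) ** (1.0 / M1)


if __name__ == "__main__":
    for nits in (0.005, 1, 100, 1000, 10000):
        print(f"{nits:>8} nits -> PQ signal {pq_encode(nits):.4f}")
```

One consequence of the curve's shape is that 100 nits, a typical SDR reference white, already sits at about half of the PQ signal range, which leaves the upper half for highlights.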

Benefits

- Significant Brightness and Contrast Enhancement: Overall image becomes sharper and more visually striking.

- Improved Shadow Areas: Shadows are noticeably brightened, enhancing visibility in dark regions.

Limitations

- Overexposed Highlights: Bright areas (e.g., explosions) tend to be blown out, losing detail.

- Loss of Detail in Bright and Dark Areas: Enhanced contrast and saturation come at the cost of fine details, especially in highlights and shadows.

- Color Oversaturation: Warm tones may appear unnatural due to excessive color enhancement, leading to potential color clipping or overcorrection.

- Aggressive HDR Style: Less balanced than native HDR, potentially less suitable for natural or cinematic footage.

- Longer Conversion Time with Custom Settings: Tweaking advanced parameters can drastically increase rendering time.

- Lack of HDR Information: Exported videos do not display HDR information in MediaInfo.
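The last limitation, missing HDR information, can be checked on any exported file. Our tests used MediaInfo; the Python sketch below shows an alternative approach that reads the same kind of stream tags with ffprobe, which is assumed to be installed as part of ffmpeg. The helper names are illustrative. A stream is treated as HDR-flagged when its transfer characteristic is PQ (`smpte2084`) or HLG (`arib-std-b67`).

```python
import json
import subprocess

# Transfer characteristics that mark a stream as HDR, as named by ffprobe.
HDR_TRANSFERS = {
    "smpte2084": "PQ",
    "arib-std-b67": "HLG",
}


def classify_stream(stream: dict) -> str:
    """Describe whether a video stream's tags mark it as HDR."""
    transfer = stream.get("color_transfer", "unknown")
    if transfer in HDR_TRANSFERS:
        return f"HDR ({HDR_TRANSFERS[transfer]})"
    return f"not flagged as HDR (transfer: {transfer})"


def probe_first_video_stream(path: str) -> dict:
    """Return the color-related tags of the first video stream via ffprobe."""
    result = subprocess.run(
        [
            "ffprobe", "-v", "error",
            "-select_streams", "v:0",
            "-show_entries",
            "stream=color_transfer,color_primaries,color_space,pix_fmt",
            "-of", "json",
            path,
        ],
        capture_output=True, text=True, check=True,
    )
    streams = json.loads(result.stdout).get("streams", [])
    return streams[0] if streams else {}
```

Calling `classify_stream(probe_first_video_stream("export.mp4"))` on a converted file (the file name here is hypothetical) gives a quick indication of whether a player is likely to recognize it as HDR.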

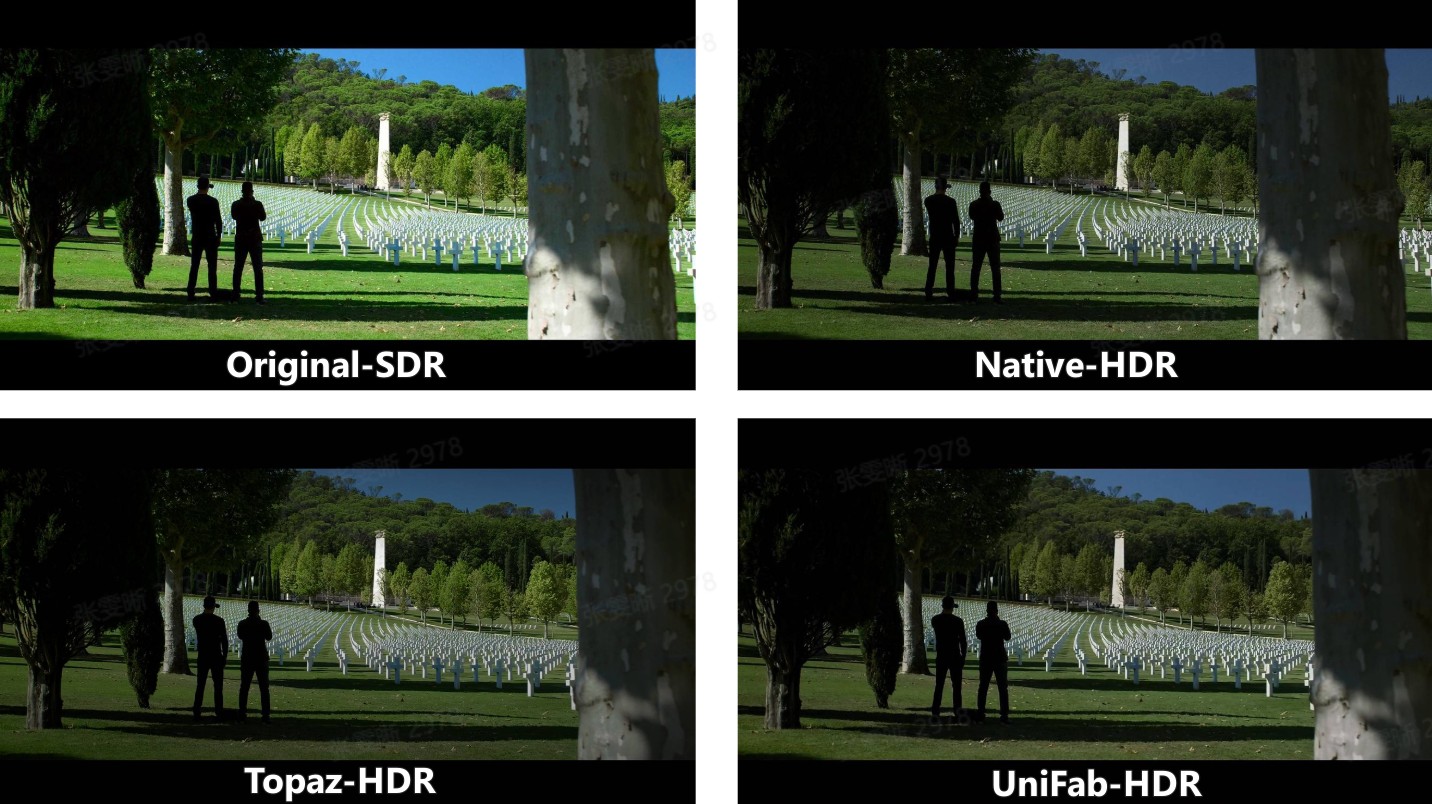

UniFab vs. Topaz - SDR to HDR Performance Comparison

We tested UniFab HDR Upconverter AI and Topaz Video AI's Hyperion model to evaluate their performance in terms of output quality and processing speed. Below are the test environment details and source video specifications:

Test Machine:

- GPU: NVIDIA GeForce RTX 4070

- CPU: AMD Ryzen 7 5700G with Radeon Graphics

Source Video:

- Duration: 9 minutes 57 seconds

- Resolution: 1920×1080

- Frame Rate: 23.976 fps

| Evaluation Aspect | Topaz Hyperion | UniFab HDR Upconverter |
|---|---|---|
| Brightness & Contrast | Strong lift in brightness and contrast; dark areas are noticeably brightened, but highlights can become overblown. The result appears sharp but sacrifices detail. Tends to be more aggressive than native HDR. | Slight increase in contrast with a softer visual tone. Overall look is closer to native HDR, though highlights may appear dimmer with less clarity. |
| Color Reproduction | Colors are intense, especially warmer hues, which can cause oversaturation or unnatural tones. | Color enhancement is more restrained, maintaining a tone distribution more aligned with real HDR output. |
| Saturation | High saturation levels create vivid visuals, but the result may feel exaggerated or less natural. | Moderate saturation boost offers a more balanced appearance that stays faithful to native HDR aesthetics. |
| Detail Preservation | Highlight and shadow details are often lost due to extreme contrast and color boosting, affecting texture realism. | Midtones and shadows retain better clarity, though highlights may lack detail and show minor distortions. |
| Conversion Time | Around 1–1.5× the video duration with default settings. Processing time increases steeply with manual adjustments. | Fast mode processes close to real-time. High-quality mode takes about 2.5× video duration, offering a more predictable timeframe. |
| HDR Metadata Support | Does not trigger HDR indicators on devices, and HDR is not reflected in file metadata. | Accurately embeds HDR metadata: HDR flags are shown both on HDR-capable TVs and in MediaInfo analysis. |
| Pricing (Lifetime) | Limited free trial; $299 | 30-day free trial with full features and no watermark; $119 |
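To translate the conversion-time multipliers in the table into wall-clock estimates for the 9 minute 57 second test clip, the small Python sketch below multiplies the clip length by each reported factor. The factors are the approximate figures from our comparison, and "close to real-time" is treated as 1.0× as an assumption; actual times will vary with hardware and settings.

```python
import math

SOURCE_DURATION_S = 9 * 60 + 57  # 9 minutes 57 seconds, from the test setup

# Multipliers of the video duration, as reported in the comparison.
REPORTED_FACTORS = {
    "Topaz Hyperion, default (low end)": 1.0,
    "Topaz Hyperion, default (high end)": 1.5,
    "UniFab, Fast mode (assumed 1.0x)": 1.0,
    "UniFab, High-Quality mode": 2.5,
}


def estimate_seconds(duration_s: float, factor: float) -> int:
    """Estimated processing time in whole seconds, rounded up."""
    return math.ceil(duration_s * factor)


def format_duration(seconds: int) -> str:
    """Render a number of seconds as minutes and seconds."""
    minutes, secs = divmod(int(seconds), 60)
    return f"{minutes}m{secs:02d}s"


if __name__ == "__main__":
    for label, factor in REPORTED_FACTORS.items():
        estimate = estimate_seconds(SOURCE_DURATION_S, factor)
        print(f"{label}: about {format_duration(estimate)}")
```

For this clip, the estimates range from just under 10 minutes at 1.0× to roughly 25 minutes at 2.5×.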

Conclusion

If you don't want to compromise on the viewing experience of your favorite home videos, movie collections, or office presentation videos, an SDR to HDR converter is a strong option. To cover a range of video editing and customization needs, consider a two-in-one converter and video upscaler such as UniFab HDR Upconverter AI.

FAQs about SDR to HDR Conversion

Can you convert video to HDR?

Yes, you can convert video to HDR. Tools like UniFab HDR Upconverter AI use AI and inverse tone mapping to expand dynamic range, enhance brightness and contrast, and add HDR-like color depth to SDR videos. It also accurately embeds HDR metadata, so HDR flags are recognized on HDR-capable TVs and shown in MediaInfo analysis.
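To give a feel for what inverse tone mapping means in its simplest form, here is a deliberately naive Python sketch. It linearizes an SDR code value with a display gamma, keeps shadows and midtones at their SDR brightness, and stretches only the brightest part of the range toward an HDR peak. This is not UniFab's model or any product's algorithm. The gamma of 2.4, the 100-nit SDR white, the 1000-nit HDR peak, and the knee position are all assumptions chosen for illustration.

```python
def sdr_to_linear(code_value: float, gamma: float = 2.4) -> float:
    """Normalized SDR code value in [0, 1] -> relative linear light in [0, 1]."""
    clamped = min(max(code_value, 0.0), 1.0)
    return clamped ** gamma


def expand_to_nits(linear: float, sdr_white: float = 100.0,
                   hdr_peak: float = 1000.0, knee: float = 0.5) -> float:
    """Map relative linear light to absolute nits.

    Below the knee the SDR luminance is kept as is. Above it, a quadratic
    term adds extra brightness so that full-scale white reaches hdr_peak.
    """
    t = max(0.0, (linear - knee) / (1.0 - knee))
    return sdr_white * linear + (hdr_peak - sdr_white) * t * t


def inverse_tone_map(code_value: float, **kwargs) -> float:
    """Naive SDR code value -> HDR luminance in nits."""
    return expand_to_nits(sdr_to_linear(code_value), **kwargs)


if __name__ == "__main__":
    for code in (0.0, 0.25, 0.5, 0.75, 0.9, 1.0):
        print(f"SDR {code:.2f} -> {inverse_tone_map(code):7.1f} nits")
```

A single global curve like this cannot tell a bright sky from a white shirt, which is one reason dedicated converters use more elaborate, content-aware approaches.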

Does SDR to HDR conversion lose video detail & data?

Not by design. SDR to HDR upscaling aims to make existing video detail more prominent, vibrant, and visible while balancing color, brightness, and light, rather than discarding data. Results depend on the tool, though: as the comparison above shows, aggressive settings can blow out highlights or lose shadow detail. If you want to know how to convert HDR to SDR, refer to this post.

If you look for HDR software, you will find very limited options, many of them with complicated steps and minimal video customization. In that case, try a powerful and hassle-free SDR to HDR converter such as UniFab HDR Upconverter AI, which includes video editing and customization options.

How to change a monitor from SDR to HDR?

To switch from SDR to HDR, make sure your monitor supports HDR and your graphics drivers are updated. Then, go to your system display settings (e.g., Windows Settings > Display), select your HDR-capable monitor, and turn on "Use HDR." Also enable HDR in your monitor's on-screen menu if needed. Play HDR-compatible content to enjoy enhanced brightness, contrast, and color.